RoboFinals Industrial Benchmark

How Early Adopters Are Scaling Model Evaluation for Physical AI

Evaluation Is Becoming the Bottleneck of Physical AI

Robotics foundation models are advancing rapidly. Teams are training increasingly capable policies across diverse tasks, robots, and environments. Yet as these systems scale, one challenge is becoming clear: evaluation is now the primary bottleneck in robotics development.

Many robotics labs are encountering the same pattern. Their models have outgrown nearly all existing academic simulation benchmarks and now surpass them easily, yet teams still lack reliable ways to measure capability, track progress, or compare approaches at the frontier.

In response, teams often fall back to real-world testing. But unlike autonomous driving, robotics has no “shadow mode” equivalent. Meaningful evaluation requires hundreds of physical setups, continuous maintenance, and strict safety procedures. As models scale, this approach quickly becomes impractical.

Evaluation is no longer a downstream validation step.

Training builds capability. Evaluation defines progress.

For Physical AI to advance systematically, robotics teams need scalable infrastructure to measure real improvements across tasks, robots, and environments.

That is the goal of RoboFinals.

Early Adopters Are Already Using RoboFinals

Across foundation models, humanoid robotics, and industrial automation, RoboFinals is emerging as a shared infrastructure for systematically measuring robot capability.

Examples include:

- Qwen, which uses RoboFinals to run large-scale evaluations of embodied foundation models across diverse tasks and environments.

- Fourier, which evaluates humanoid robot policies under complex interaction scenarios.

- RoboForce, which stress-tests industrial robotic policies before deployment.

- Peritas, which applies RoboFinals for safety-critical validation in medical robotics systems.

For these teams, RoboFinals provides something robotics has long lacked: a scalable and repeatable way to evaluate systems before they reach the real world.

RoboFinals-100: An Industrial Benchmark for Embodied AI

Unlike traditional academic benchmarks that emphasize narrow tasks or simplified environments, RoboFinals-100 focuses on:

- progressive difficulty

- high task diversity

- industry-aligned realism

The benchmark spans multiple real-world domains, including:

- household tasks such as cleaning and organizing

- factory tasks involving part handling and assembly

- retail scenarios including sorting and restocking

RoboFinals-100 also provides comprehensive interaction coverage, including:

- rigid objects

- articulated systems such as cabinets and appliances

- deformable materials including cables, cloth, and liquids

These environments are built on Lightwheel’s SimReady asset ecosystem, ensuring consistent physical behavior across tasks.

The benchmark also supports cross-robot evaluation, enabling models to be tested across:

- tabletop manipulators

- mobile manipulators

- full loco-manipulation systems

This allows robotics teams to evaluate models under conditions closer to real-world deployment.

AutoDataGen: Automated Synthetic Data Generation

To support this benchmarking workflow, Lightwheel developed AutoDataGen, an automated simulation data generation pipeline built on top of NVIDIA Isaac Lab.

Its key capabilities include:

- LLM-driven task decomposition: Decomposes high-level tasks into atomic skills starting from task code, scene configurations, or natural-language descriptions

- Isaac Lab integration: Built as an additional package on Isaac Lab with minimal intrusive changes to existing projects

- Unified abstraction: Provides a consistent interface for the entire workflow, making it easy to extend and reuse

- Pluggable modules: Custom decomposers, skills, and action adapters can be swapped in through the registration system
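
To make the idea of pluggable modules concrete, here is a minimal sketch of a registration pattern in plain Python. It is not AutoDataGen's actual API: the names `DECOMPOSERS`, `register_decomposer`, `split_on_then`, and `decompose` are hypothetical, and the toy decomposer simply splits a sentence on the word "then" where a real pipeline would call an LLM.

```python
from typing import Callable, Dict, List

# Hypothetical type: a decomposer maps a task description to an ordered
# list of atomic skill descriptions.
Decomposer = Callable[[str], List[str]]

# Hypothetical registry keyed by decomposer name.
DECOMPOSERS: Dict[str, Decomposer] = {}


def register_decomposer(name: str) -> Callable[[Decomposer], Decomposer]:
    """Return a decorator that stores a decomposer under `name`."""

    def wrap(fn: Decomposer) -> Decomposer:
        if name in DECOMPOSERS:
            raise ValueError(f"decomposer {name!r} is already registered")
        DECOMPOSERS[name] = fn
        return fn

    return wrap


@register_decomposer("split_on_then")
def split_on_then(task: str) -> List[str]:
    """Toy stand-in for an LLM: split the description on the word 'then'."""
    return [step.strip() for step in task.split(" then ") if step.strip()]


def decompose(task: str, decomposer: str = "split_on_then") -> List[str]:
    """Look up a registered decomposer by name and apply it to the task."""
    return DECOMPOSERS[decomposer](task)


if __name__ == "__main__":
    steps = decompose(
        "open the cabinet then pick up the cup then place the cup on the shelf"
    )
    for index, step in enumerate(steps, start=1):
        print(index, step)
```

Swapping in a different decomposer then only requires registering another function under a new name; the calling code stays unchanged.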

AutoDataGen also integrates with LW-BenchHub, Lightwheel’s scenario and task library, enabling automatic decomposition and execution of benchmark tasks across diverse environments.

Several robotics teams are already using AutoDataGen to automatically generate action data for benchmark tasks before running RoboFinals evaluations.

AutoDataGen thus provides the automated data generation layer that supports large-scale robotics benchmarking workflows.

Infrastructure That Scales: The RoboFinals Evaluation Stack

RoboFinals is built on NVIDIA Isaac Lab-Arena, an open-source framework available on GitHub that provides a collaborative system for large-scale robot policy evaluation and benchmarking in simulation. Its evaluation and task layers were designed in close collaboration with Lightwheel. Isaac Lab-Arena decouples three core components of evaluation:

- environments

- robots

- tasks

This modular LEGO-like architecture enables consistent evaluation across diverse robots and simulation setups.
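
The decoupling described above can be sketched as three independent descriptions that are combined only when an evaluation case is built. This is an illustration of the design idea, not the Isaac Lab-Arena API: the classes `Environment`, `Robot`, `Task`, and `EvalCase`, the function `build_matrix`, and all example names are assumptions made for this sketch.

```python
from dataclasses import dataclass
from itertools import product
from typing import List, Sequence


@dataclass(frozen=True)
class Environment:
    name: str


@dataclass(frozen=True)
class Robot:
    name: str


@dataclass(frozen=True)
class Task:
    name: str


@dataclass(frozen=True)
class EvalCase:
    """One evaluation case: a task run by a robot in an environment."""

    environment: Environment
    robot: Robot
    task: Task


def build_matrix(
    environments: Sequence[Environment],
    robots: Sequence[Robot],
    tasks: Sequence[Task],
) -> List[EvalCase]:
    """Combine every environment, robot, and task into an evaluation matrix."""
    return [EvalCase(e, r, t) for e, r, t in product(environments, robots, tasks)]


if __name__ == "__main__":
    cases = build_matrix(
        [Environment("kitchen"), Environment("factory_cell")],
        [Robot("tabletop_arm"), Robot("mobile_manipulator")],
        [Task("pick_and_place")],
    )
    for case in cases:
        print(case.environment.name, case.robot.name, case.task.name)
```

With two environments, two robots, and one task, the matrix contains four cases. Because each component is defined once, adding a new robot extends the matrix without touching any environment or task definition.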

Lightwheel extends Arena to support:

- complex task logic

- long-horizon workflows

- generalized evaluation protocols for robotics foundation models

To scale evaluation further, RoboFinals integrates with NVIDIA OSMO, NVIDIA’s orchestration platform for distributed AI workloads.

OSMO automates evaluation workflows by managing experiment execution, task scheduling, and distributed policy rollouts across compute clusters.

Combined with scalable cloud GPU environments, including deployments on Nebius GPU clusters, RoboFinals enables thousands of benchmark episodes to run in parallel.
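
As a rough single-machine analogy for running many episodes in parallel and aggregating the results, the sketch below fans seeded episodes out over a thread pool and reports a success rate. It does not use OSMO or any simulator: `run_episode` is a hypothetical stand-in that draws a seeded random outcome, and the 0.7 success probability is an arbitrary placeholder, not a measured result.

```python
import random
from concurrent.futures import ThreadPoolExecutor
from typing import List


def run_episode(seed: int) -> bool:
    """Hypothetical stand-in for one simulated policy rollout.

    A real rollout would step a simulator; here the outcome is a seeded
    random draw so that results are reproducible per seed.
    """
    rng = random.Random(seed)
    return rng.random() < 0.7  # placeholder success probability


def evaluate(num_episodes: int, max_workers: int = 8) -> float:
    """Run episodes concurrently and return the fraction that succeeded."""
    if num_episodes <= 0:
        raise ValueError("num_episodes must be positive")
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so outcome i belongs to seed i.
        outcomes: List[bool] = list(pool.map(run_episode, range(num_episodes)))
    return sum(outcomes) / len(outcomes)


if __name__ == "__main__":
    print(f"success rate: {evaluate(1000):.3f}")
```

Fixing one seed per episode keeps the aggregate reproducible regardless of how many workers run the episodes, which is the property a distributed evaluation needs in order to compare runs.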

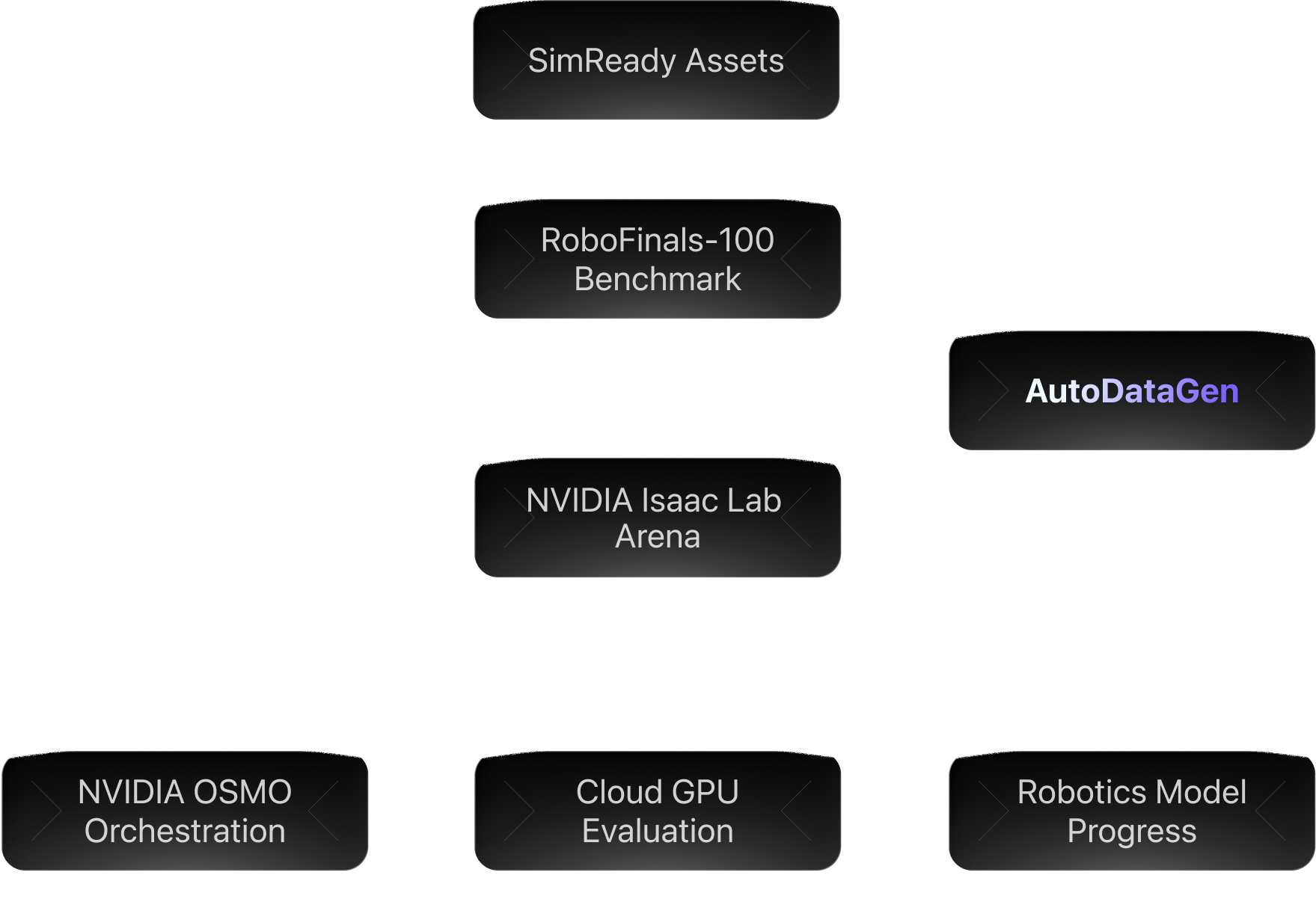

Together, these components form the RoboFinals Evaluation Stack:

This architecture transforms evaluation from a slow, manual process into automated infrastructure for robotics development.

Toward the ImageNet of Robotics Evaluation

Datasets such as ImageNet provided a common evaluation framework that allowed researchers to measure progress, compare approaches, and identify real breakthroughs.

Physical AI now faces a similar inflection point.

As robotics foundation models scale, the field needs a shared benchmark to measure capability across tasks, robots, and environments. Without this infrastructure, progress becomes difficult to measure and comparisons remain ambiguous.

RoboFinals is designed to fill this gap.

By combining:

- SimReady simulation environments

- RoboFinals-100 benchmark tasks

- NVIDIA Isaac Lab-Arena evaluation framework

- NVIDIA OSMO-powered orchestration

RoboFinals enables systematic, reproducible evaluation at foundation-model scale.

Our ambition is clear:

RoboFinals aims to become the ImageNet of robotics evaluation.

A shared infrastructure where robotics teams can measure progress, compare systems, and accelerate the development of Physical AI.

When evaluation becomes standardized, innovation accelerates.