LW Training Platform

Policy Evaluation in Lwlab

Zero-Dependency Isolation

The policy and the environment run in fully independent processes, each with its own isolated Python environment. This architecture avoids the notorious "dependency hell" problem of forcing both sides to share a single set of package versions.

Policy side: can use any deep learning framework, pinned to whatever versions it needs, without dependency conflicts.

Environment side: runs Isaac Lab independently, with its own required dependencies.

Rapid iteration: update policy models without restarting the heavy simulation environment.

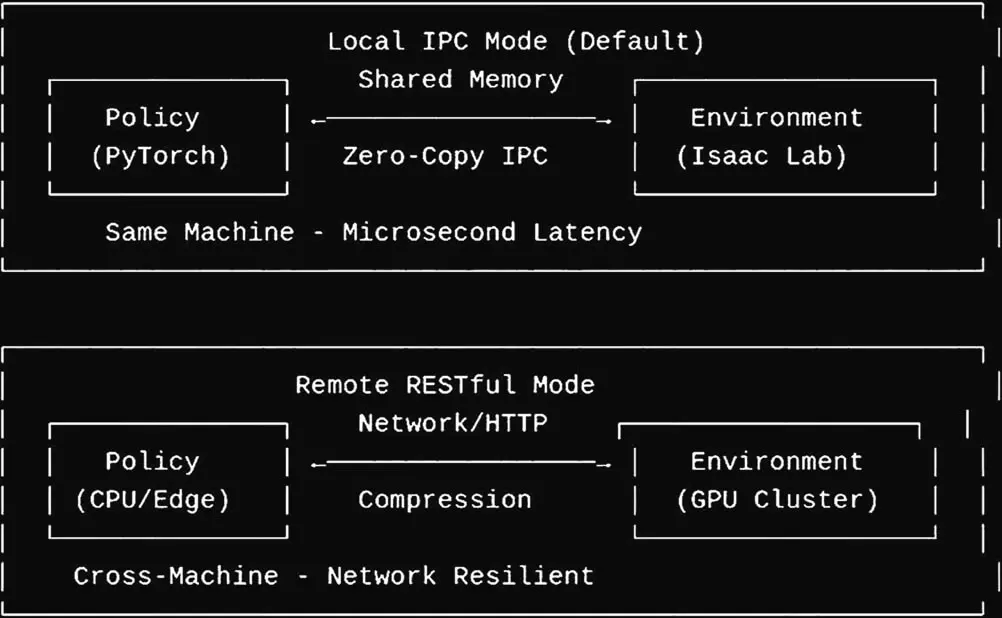
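The process isolation described above can be sketched with nothing but the standard library. This is a minimal illustration under stated assumptions, not Lwlab's implementation. It assumes a simple JSON-lines protocol over the child's stdin/stdout, and `ENV_SERVER`, `start_env`, `call`, `policy`, and `run_episode` are hypothetical names. The essential point is that the environment is launched through an explicit interpreter path. That path could belong to an Isaac Lab virtualenv, while the policy process imports a different framework.

```python
import json
import subprocess
import sys

# Hypothetical stand-in for the environment process. In a real setup this
# script would import Isaac Lab inside its own virtualenv; here it is a toy
# 1-D environment that speaks one JSON object per line on stdin/stdout.
ENV_SERVER = r"""
import json, sys
state = 0.0
for line in sys.stdin:
    msg = json.loads(line)
    if msg["cmd"] == "close":
        break
    if msg["cmd"] == "reset":
        state = 0.0
    elif msg["cmd"] == "step":
        state += msg["action"]
    print(json.dumps({"obs": state}), flush=True)
"""


def start_env(python_exe: str) -> subprocess.Popen:
    """Launch the environment under its own interpreter (e.g. another venv)."""
    return subprocess.Popen(
        [python_exe, "-c", ENV_SERVER],
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
        text=True,
    )


def call(proc: subprocess.Popen, **msg) -> dict:
    """Send one request line and block until the reply line arrives."""
    proc.stdin.write(json.dumps(msg) + "\n")
    proc.stdin.flush()
    return json.loads(proc.stdout.readline())


def policy(obs: float) -> float:
    """Placeholder policy; this process is free to import any DL framework."""
    return 1.0 if obs < 3 else -1.0


def run_episode(python_exe: str, steps: int) -> float:
    """Reset the isolated environment, then step it with the local policy."""
    env = start_env(python_exe)
    try:
        obs = call(env, cmd="reset")["obs"]
        for _ in range(steps):
            obs = call(env, cmd="step", action=policy(obs))["obs"]
    finally:
        # Ask the environment to exit, close its stdin, and wait for it.
        env.communicate(json.dumps({"cmd": "close"}) + "\n")
    return obs


if __name__ == "__main__":
    # Point this at the environment venv's interpreter in a real deployment.
    print(run_episode(sys.executable, steps=5))  # prints 3.0
```

Here both sides use `sys.executable` so that the sketch runs anywhere. Pointing `python_exe` at another virtualenv's interpreter gives each side its own packages. In this toy, the environment is a child of the policy process, so restarting the policy also restarts the environment. The rapid-iteration mode described above would instead keep the environment running as a standalone server that policy processes connect to.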

High-Performance Zero-Copy Communication

The framework implements an optimized inter-process communication (IPC) protocol that uses shared memory for data transfer.
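The zero-copy idea can be illustrated with Python's `multiprocessing.shared_memory`. This sketch assumes a hypothetical layout (an 8-byte frame counter followed by one uint8 RGB-D frame) and uses hypothetical helper names (`create_region`, `write_frame`, `FrameReader`). It is not Lwlab's actual protocol. The reader indexes directly into the mapped region, so each new frame becomes visible without any serialization or transfer. Synchronization is deliberately omitted. A real protocol would need a lock, a semaphore, or a sequence counter so that the reader never sees a half-written frame.

```python
import struct
from multiprocessing import shared_memory

# Hypothetical layout (not Lwlab's format): an 8-byte frame counter followed
# by one H x W x C uint8 frame (RGB + 8-bit depth). Real frames are far larger.
H, W, C = 4, 6, 4
FRAME_BYTES = H * W * C
HEADER = struct.Struct("<Q")


def create_region() -> shared_memory.SharedMemory:
    """Environment side: allocate one shared region for header + frame."""
    return shared_memory.SharedMemory(create=True, size=HEADER.size + FRAME_BYTES)


def write_frame(shm: shared_memory.SharedMemory, frame_id: int, fill: int) -> None:
    """Environment side: write pixels in place, then publish the frame counter."""
    # Stand-in for a renderer writing directly into the mapped memory.
    shm.buf[HEADER.size:HEADER.size + FRAME_BYTES] = bytes([fill]) * FRAME_BYTES
    HEADER.pack_into(shm.buf, 0, frame_id)


class FrameReader:
    """Policy side: attach by name once, then read straight from the mapping."""

    def __init__(self, name: str):
        self._shm = shared_memory.SharedMemory(name=name)

    def frame_id(self) -> int:
        return HEADER.unpack_from(self._shm.buf, 0)[0]

    def pixel(self, y: int, x: int, c: int) -> int:
        # Index into the shared buffer; the frame is never serialized or copied.
        return self._shm.buf[HEADER.size + (y * W + x) * C + c]

    def close(self) -> None:
        self._shm.close()


if __name__ == "__main__":
    env_shm = create_region()
    # In practice the reader lives in the policy process; here both share one.
    reader = FrameReader(env_shm.name)
    write_frame(env_shm, frame_id=1, fill=7)
    print(reader.frame_id(), reader.pixel(2, 3, 1))  # prints 1 7
    write_frame(env_shm, frame_id=2, fill=9)
    print(reader.frame_id(), reader.pixel(2, 3, 1))  # prints 2 9
    reader.close()
    env_shm.close()
    env_shm.unlink()  # the creator removes the region when done
```

Only the region's name has to cross the process boundary. After that, the policy reads observation bytes in place, no matter how large the frame is.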

Seamless remote environment access: clients interact with remote environments as if they were local, through transparent API calls.

Zero-copy data sharing via shared memory regions: large observation data (for example, multi-camera RGB-D streams) is transferred without serialization.

Sub-millisecond latency for observation-action loops, enabling real-time policy evaluation with negligible overhead.
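Transparent remote access is commonly built as a client-side proxy that turns method calls into messages. The sketch below uses `multiprocessing.connection` from the standard library. `ToyEnv`, `serve`, and `RemoteEnv` are hypothetical names, and the pickle-over-socket transport is an assumption for illustration, not Lwlab's IPC protocol. Unlike the shared-memory path, this transport serializes every message, so it suits small control messages better than large observations.

```python
import threading
from multiprocessing.connection import Client, Listener


class ToyEnv:
    """Hypothetical stand-in for the simulation environment on the server."""

    def __init__(self):
        self.t = 0

    def reset(self):
        self.t = 0
        return {"t": self.t}

    def step(self, action):
        self.t += 1
        return {"t": self.t, "reward": -abs(action)}, self.t >= 3


def serve(listener: Listener, env) -> None:
    """Environment side: execute forwarded method calls until the client leaves."""
    with listener.accept() as conn:
        while True:
            try:
                method, args, kwargs = conn.recv()
            except EOFError:
                break  # client closed the connection
            try:
                if method.startswith("_"):
                    raise AttributeError(f"private method {method!r} not exposed")
                conn.send(("ok", getattr(env, method)(*args, **kwargs)))
            except Exception as exc:
                conn.send(("err", repr(exc)))


class RemoteEnv:
    """Policy side: any unknown attribute becomes a call on the remote env."""

    def __init__(self, address, authkey: bytes):
        self._conn = Client(address, authkey=authkey)

    def __getattr__(self, method):
        def remote_call(*args, **kwargs):
            self._conn.send((method, args, kwargs))
            status, value = self._conn.recv()
            if status == "err":
                raise RuntimeError(value)
            return value
        return remote_call

    def close(self):
        self._conn.close()


if __name__ == "__main__":
    key = b"change-me"  # shared secret checked during the connection handshake
    listener = Listener(("localhost", 0), authkey=key)  # port 0: pick a free port
    server = threading.Thread(target=serve, args=(listener, ToyEnv()), daemon=True)
    server.start()

    env = RemoteEnv(listener.address, authkey=key)
    obs, done = env.reset(), False
    while not done:
        obs, done = env.step(0.5)  # reads exactly like a local call
    print(obs)  # prints {'t': 3, 'reward': -0.5}

    env.close()
    server.join()
    listener.close()
```

The policy loop only calls `env.reset()` and `env.step(...)`, and `RemoteEnv` hides whether those calls run locally or in another process. Because `Connection.recv` unpickles whatever it receives, this pattern should only connect trusted peers. The `authkey` handshake helps enforce that.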

Flexible Deployment Modes

The distributed design supports several deployment modes: